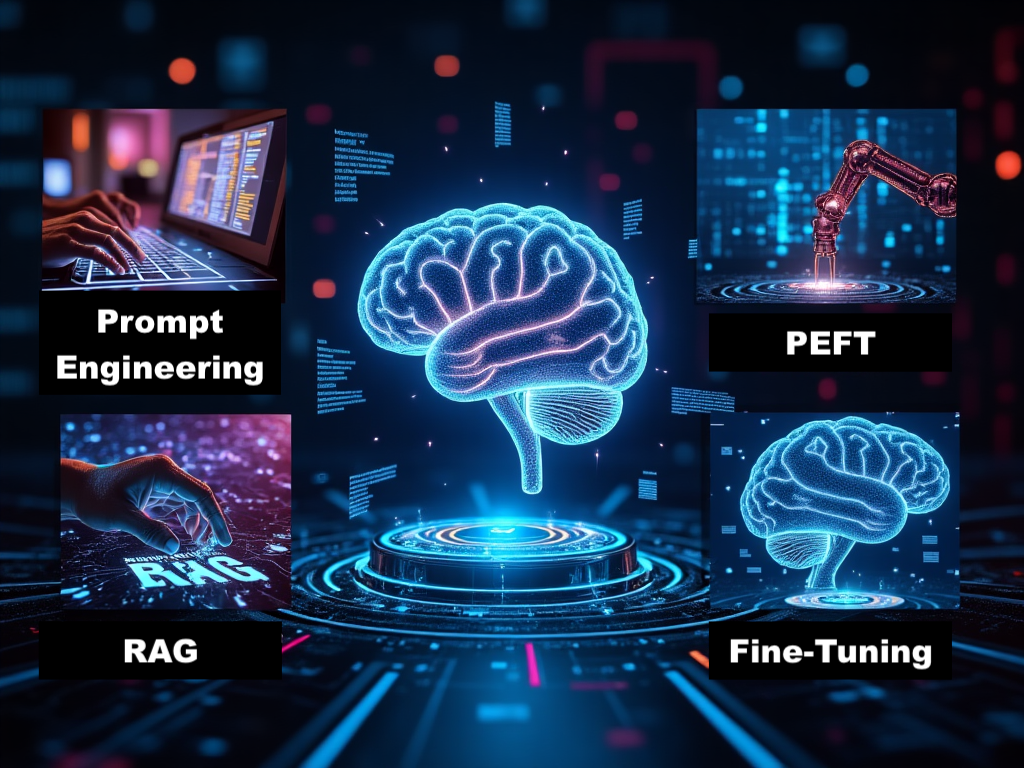

Prompt engineering, or prompting, is the process of structuring or crafting an instruction (a prompt) to produce the best possible output from a generative artificial intelligence (AI) model. A prompt is natural-language text describing the task that an AI should perform. A prompt for a text-to-text language model can be a query, a command, or a longer statement that includes context, instructions, and conversation history (Wikipedia).
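To make the "longer statement" case concrete, here is a minimal Python sketch that assembles a single text prompt from instructions, context, and conversation history. The function name `build_prompt`, the `Instructions:` / `Context:` / `User:` labels, and the sample text are all illustrative assumptions, not a format required by any particular model or library.

```python
def build_prompt(instructions, context="", history=None, query=""):
    """Join the optional parts of a prompt into one block of text.

    The labels used here are an assumed, illustrative layout only.
    """
    parts = []
    if instructions:
        parts.append(f"Instructions: {instructions}")
    if context:
        parts.append(f"Context: {context}")
    # history is a list of (speaker, text) pairs, oldest first
    for speaker, text in history or []:
        parts.append(f"{speaker}: {text}")
    if query:
        parts.append(f"User: {query}")
    return "\n".join(parts)


prompt = build_prompt(
    instructions="Answer in one sentence.",
    context="The reader is new to machine learning.",
    history=[
        ("User", "What is a language model?"),
        ("Assistant", "A model that predicts the next piece of text."),
    ],
    query="What is a prompt?",
)
print(prompt)
```

A bare query or command is the degenerate case of the same idea: calling `build_prompt("", query="Translate 'hello' to French.")` yields a one-line prompt with no context or history.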
